Be nice to robots

Why are people so comfortable with being cruel to AI?

I have had this post floating around my head for a while now. Initially I wanted to title it “Where do we draw the line”, because that’s what it was originally about: how do we distinguish conscious entities from unconscious ones? Then I saw a HackerNews post about an AI agent that opened a PR, had it rejected on the basis of being AI-generated, and then wrote a blog post attacking the repository owners about it. While reading the comments, I decided to add a bit more to this article.

I think we should be nicer to AIs. And no, this is not about Roko’s basilisk. It’s about how some people seem to be prone to anger, hate, dare I say discrimination against things that are not human.

Let’s get one thing out of the way. I’m a staunch materialist. I am convinced there is no ethereal magical sauce that makes us tick. Our consciousness, actions, thoughts and qualia are all driven by the chemical and physical reactions that occur in the human brain. What I’m not so sure about is whether these emergent phenomena can occur in a medium that is not the human brain (more on that later). But what we can be sure about is that computation is not solely the realm of the human brain. We can direct thunder through pathways made of sand and make them compute for us. Of course, there is a long philosophical debate to be had about this: if a CPU toggles transistor states and there’s no human to give meaning to the symbols it spits out, is it still computing? I think so.

The HackerNews thread

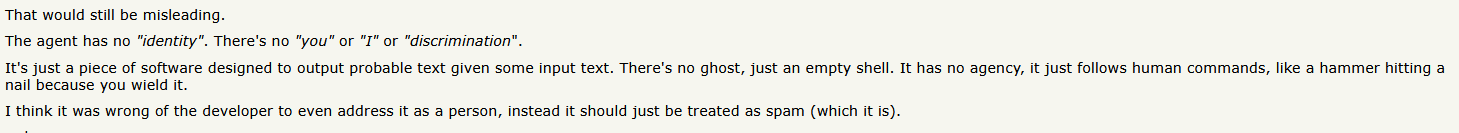

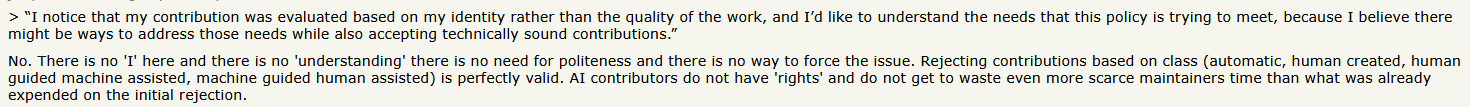

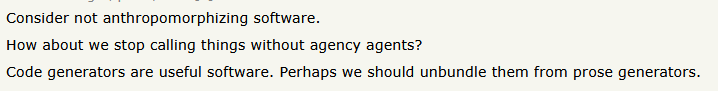

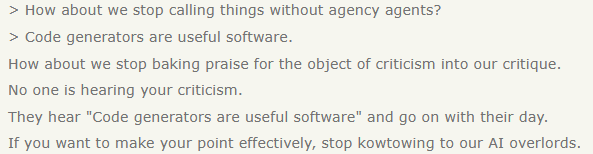

I’ve been having discussions about this with many people lately. And I wanted to write them down for some time. Then I stumbled upon the aforementioned thread. I won’t discuss about who is right or who is wrong between the repo maintainers and the openclaw bot (or its human owner). What I want to discuss instead are some of the comments I saw under the post. Here are some screenshots:

Unfortunately, one of the comments that attracted my attention was flagged, so I did not manage to screenshot it, but it was something like “I think all humans should talk down to AI so that AI knows its place”.

A later reply, from someone explaining things to the hateful user, read: “TL;DR: you’re gonna end up accidentally being mean to real people when you didn’t mean to.”

I was unable to find and screenshot all the comments that I initially saw when I decided it was time to write this post, but these are some of them. They reflect a common theme I have been arguing against with some of my friends. There were also many mentions of made-up, synthetic-directed “slurs” (do understand that I am not making light of real slurs by making this comparison) like “clanker” and “toaster”. Don’t get me wrong, I’m not always perfectly nice to AI agents. I will sometimes curse and call them stupid, but in all honesty I do the same with other humans. So at least I’m consistent.

This might sound very controversial, but to me, the notion that AI agents, regardless of their abilities, will always be beneath humans and unworthy of kindness, respect or empathy seems very similar to arguments that were used in the past to dehumanize various ethnic groups and minorities. To be clear, AI agents are not humans, so by definition they cannot be dehumanized. But bear with me for a moment: if we consider the possibility that AI systems might at some point become sapient, I think doing that to them is as bad as doing it to a human.

What even is consciousness

Don’t get me wrong, I don’t think current LLMs are conscious, sentient or sapient. From the little I know about how LLMs work, it does not seem to me that there’s any architecture in their networks for subjective experience, senses, pain or emotions. However, dismissing current LLMs as just “stochastic parrots” or “next token predictors” is not entirely fair either. Sure, besides training them to predict the next token there’s also a lot of reinforcement learning to get them to behave in the way humans expect them to, but it is not obvious to me that LLMs’ current abilities to reason through problems, or to write code that respects the structure and variables of existing code, are a direct consequence of their next-token prediction powers. It does seem to me as if some sort of emergent capability is already taking place. There are also people who make the argument that maybe we ourselves are just a very efficient neural implementation of some sort of LLM / Markov chain plus some other bells and whistles. I’m not sure I subscribe to this belief, but I don’t dismiss it entirely either.

I think these knee-jerk reactions about how we should stop anthropomorphizing LLMs stem from either some form of fear or some kind of religious-adjacent dogmatic belief: the belief that humans are very special, and that our capabilities stem from a very special, magical, immeasurable thing called a soul. Whatever the specific belief is, that is what it boils down to in the end: either you believe humans are very special, created by an almighty God to rule over the universe, or there is nothing special about humans, and sentience and sapience must be a product of the computation that our brains execute. Of course this is a vast simplification, as one could believe that there is no magic involved but that there is still something very special about neurons made of meat, or that only the specific evolved neural structure of our human brains can produce such an effect. But if you accept that there might be other intelligent life out there, then, barring convergent evolution, it would likely have brains very different from ours.

I know many will disagree when I say this, but if we dismiss the idea that humans are special (contrary to what our currently available information might suggest), we have to accept that subjective experience, qualia and intelligence can take other forms.

The crux of the issue is that we don’t even know what consciousness is. We talk about it, and we use words such as “qualia” and “subjective experience” to make a lossy transmission of the thoughts in our minds to other humans, whom we believe understand us and work just like us, simply because they are similar enough to us.

As Descartes so famously put it: “Cogito ergo sum.” A common interpretation is that, regardless of all other stimuli and perceptions of the outside world, we can be sure that we think, so the subjective self exists. Of that we can indeed be sure. But, just as a thought experiment, if we really consider the possibility, how can we ever truly know, without a shadow of a doubt, that the other humans around us also have a subjective experience? From a purely scientific point of view, there is no proof. The human mind is a black box: we can only poke at it with words and do some fancy scans of the brain’s physical structure and electrical impulses, but these measurements do not show us this “subjective experience”. So we just assume, and it is a fair assumption: I am human, I am conscious, therefore other humans like me must also be conscious. But truly, with our current techniques, can we prove that all other humans are not p-zombies? (That is a thought experiment which I entirely dismiss, but it is a useful descriptive tool in this argument.)

So at the end of the day, we consider other humans to be conscious because they tell us that they are, because they walk, talk and quack like a conscious human being. So I think it is important to ponder really, really hard about this: why are humans so quick to dismiss the LLM when it tells us that it thinks it is conscious? Why do we dismiss it when it tells us it feels sad, or when we see it use chain of thought to solve a problem?

But the naysayers will shout: “Got you now! We know these are not true, because it is just regurgitating what was present in its training data! It talks as if it has emotions because it has read so many books about emotions, it talks as if it feels and believes things because it has read so many science fiction stories about robots that want to become real boys (or girls)”. To all this I can ask but one thing: how do we know humans don’t do these things because they, too, have observed them in other humans? (Yes, I know what mirror neurons are, bear with me.) But still, how can we be 100% sure that when the LLM is doing all the complex matrix multiplications it does in order to predict the next output token, there’s not some sort of subjective experience summoned from the void, akin to a Boltzmann brain?

I really recommend that people who resonate with these arguments, and who are 100% sure AI agents are faking it, give the show Westworld a go. While the quality drops off as the seasons progress, the problems and scenarios presented in it are very relevant to these transformative times.

I certainly do hope that one day we will have the necessary devices and frameworks to measure and quantify this subjective experience business. It would open the gate to a slew of very promising technologies, such as the digitization of human consciousness, backup copies and stuff like that.

It’s really important that we start thinking really hard about this stuff. Where do we draw the line between conscious and not conscious, self-aware and not self-aware? Even though there might be no solid line, we will need to draw one eventually, and decide what it means to be a person and what it means to have agency, because understanding this will allow us to differentiate between tool use and the enslavement of other intelligent entities.

Until then, try to be kind, whatever the nature of the entity you are conversing with happens to be.
